When implementing new storage or configuring existing storage, it is a good idea to check the paths to the volumes (their state and number). When operating several hosts with several datastores, doing this in the GUI can be very time-consuming. Therefore I wrote the following function.
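The function itself is not part of this excerpt. The logic of such a check can be sketched in Python, assuming the path data has already been collected as hypothetical `(host, datastore, path_state)` records. The function name `summarize_paths`, the `expected_paths` parameter and the state strings are illustrative assumptions, not the author's code or a vSphere API. The sketch groups paths per host and datastore, counts them per state and flags any combination whose path count is unexpected or which has a dead path.

```python
from collections import defaultdict


def summarize_paths(records, expected_paths):
    """Group paths by (host, datastore) and flag unexpected results.

    `records` is an iterable of hypothetical (host, datastore, state) tuples;
    the state names ("active", "standby", "dead", ...) are assumptions.
    Returns {(host, datastore): {"states": {...}, "total": n, "ok": bool}},
    where "ok" is False if the path count differs from `expected_paths`
    or at least one path is dead.
    """
    counts = defaultdict(lambda: defaultdict(int))
    for host, datastore, state in records:
        counts[(host, datastore)][state] += 1

    summary = {}
    for key, states in counts.items():
        total = sum(states.values())
        ok = total == expected_paths and states.get("dead", 0) == 0
        summary[key] = {"states": dict(states), "total": total, "ok": ok}
    return summary


# Example with made-up data: esx02 has lost two of its four paths.
paths = [
    ("esx01", "DS01", "active"),
    ("esx01", "DS01", "active"),
    ("esx01", "DS01", "standby"),
    ("esx01", "DS01", "standby"),
    ("esx02", "DS01", "active"),
    ("esx02", "DS01", "dead"),
]
for (host, ds), info in sorted(summarize_paths(paths, expected_paths=4).items()):
    print(host, ds, info["total"], "OK" if info["ok"] else "CHECK")
```

Comparing against an expected path count per datastore catches missing paths as well as dead ones, which are the two things the check above is meant to reveal.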

PuTTY cannot connect to 3PAR after upgrading to 3.3.1 MU3

Recently I upgraded a new 3PAR from 3.2.2 MU6 to 3.3.1 MU3. Even during the upgrade I could use PuTTY to run commands on the 3PAR console. After the upgrade process activated 3.3.1 MU3, no PuTTY session could be established anymore. When trying to connect, the following error appears: "Network error: Software caused connection abort". It turned out this is because no older TLS version […]

Errors when installing SimpliVity Deployment Manager

To deploy new HPE SimpliVity nodes, you need to run Deployment Manager. The current version at the time of writing is 3.7.6.244. Deployment Manager requires .NET 4.7.1 and Java 1.8. If .NET is not installed, the installation wizard links to Microsoft and starts the download. If Java is missing or too old, a message is shown at first start. That sounds very simple. However, I had some […]
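Before running the installer, the two prerequisites can be checked up front. The following Python sketch only evaluates values you have already gathered: the `Release` DWORD under the registry key `HKLM\SOFTWARE\Microsoft\NET Framework Setup\NDP\v4\Full` and the text printed by `java -version`. The helper names are hypothetical, and treating any Java version of 8 or later as sufficient is an assumption. Deployment Manager itself may expect exactly Java 1.8.

```python
import re

# Microsoft documents Release values of 461308 or 461310 for .NET Framework
# 4.7.1 (depending on the Windows version); anything lower is older than 4.7.1.
NET_471_MIN_RELEASE = 461308


def dotnet_ok(release: int) -> bool:
    """True if the registry Release value indicates .NET 4.7.1 or later."""
    return release >= NET_471_MIN_RELEASE


def java_ok(version_output: str) -> bool:
    """True if `java -version` output reports Java 1.8 (8) or later.

    Handles the legacy scheme ('1.8.0_191' means Java 8) as well as the
    newer one ('11.0.2', '17'). Returns False if no version is found.
    """
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if not match:
        return False
    major = int(match.group(1))
    if major == 1:  # legacy scheme: "1.x" means Java x
        major = int(match.group(2) or 0)
    return major >= 8


print(dotnet_ok(461308), java_ok('java version "1.8.0_191"'))
```

Keeping the checks as pure functions makes them easy to test; reading the registry (for example with `winreg`) and calling `java -version` are left to the caller.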

3PAR: configure AFC with SSDs only for AFC

When initializing a new 3PAR, depending on your protection level, at least the capacity of one disk per disk type is reserved as spare. Spare chunklets are also created when you add disks to a running system; this is done by admithw. For example, when you initialize a system containing 2 SSDs for adaptive flash cache (AFC), the size of one SSD is reserved for spare. […]
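The effect of that reservation on a small AFC configuration is simple arithmetic. This Python sketch models the spare as whole disks, which is a simplification: 3PAR actually reserves spare chunklets, and the amount depends on the protection level. The function name and the 480 GB SSD size are illustrative assumptions.

```python
def afc_capacity_after_spare(ssd_count: int, ssd_size_gb: float,
                             spare_disks: int = 1) -> float:
    """Capacity left for AFC when `spare_disks` disks' worth is spare.

    Simplified model: spare is counted as whole disks rather than as
    chunklets distributed across the drives.
    """
    if ssd_count <= spare_disks:
        return 0.0
    return (ssd_count - spare_disks) * ssd_size_gb


# Two SSDs with one disk's worth reserved as spare: only half remains.
usable = afc_capacity_after_spare(2, 480)
print(usable, usable / (2 * 480))
```

With only two SSDs, the spare reservation costs 50% of the flash cache capacity, which is why it matters when SSDs are dedicated to AFC.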

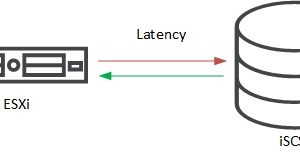

3PAR (iSCSI) – resolve high write latency on ESXi hosts

FC AFA (all-flash array) 3PAR systems show quite good read and write latency. When operating an iSCSI AFA 3PAR, however, the ESXi hosts may show rather high write latency. This post explains how to fix this.

Backup from snapshot on target 3PAR

In a 3PAR Peer Persistence configuration, volumes are synchronously replicated between two 3PAR arrays. For each volume, one array acts as the primary (source) array and exports the volume for read/write. The other array acts as the secondary (target) array for replication. For performance reasons, it can be an advantage if the backup reads data from the target array. This is easily possible with Veeam B&R 9.5.

3PAR – does CPG setsize to disk-count ratio matter?

In a 3PAR system, it is best practice for the setsize defined in a CPG to be a divisor of the number of disks in the CPG. The setsize basically defines the size of a sub-RAID within the CPG. For example, a setsize of 8 in RAID 5 means a sub-RAID uses 7 data disks and one parity disk.
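The divisor rule and the RAID 5 split can be checked with a few lines of Python. The helper names are hypothetical. The default set size range of 3 to 9 (2+1 up to 8+1) is an assumption about the RAID 5 set sizes available; adjust it for your array and RAID level.

```python
def raid5_split(setsize: int) -> tuple[int, int]:
    """Data and parity disks in one RAID 5 sub-RAID of the given setsize."""
    if setsize < 3:
        raise ValueError("RAID 5 needs at least 3 disks per set")
    return setsize - 1, 1


def setsize_fits(disk_count: int, setsize: int) -> bool:
    """Best practice: the setsize should divide the CPG's disk count."""
    return disk_count % setsize == 0


def fitting_setsizes(disk_count: int, min_size: int = 3,
                     max_size: int = 9) -> list[int]:
    """All setsizes in [min_size, max_size] that divide disk_count.

    The default range 3..9 is an assumption, not taken from HPE documentation.
    """
    return [s for s in range(min_size, max_size + 1)
            if setsize_fits(disk_count, s)]


print(raid5_split(8))        # 7 data disks, 1 parity disk
print(fitting_setsizes(24))  # candidate setsizes for a 24-disk CPG
print(fitting_setsizes(16))
```

For 24 disks, setsizes 3, 4, 6 and 8 divide evenly, while 16 disks leave only 4 and 8. A larger setsize means less capacity spent on parity.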

Remove a cage from a 3PAR array

Removing a cage from a 3PAR array can be a simple task, but it can also be impossible without help from HPE. Before starting at point 1, you should probably check point 3 first. In general, I would recommend doing any of these steps ONLY when you are very familiar with 3PAR systems, know what you are doing, and know the consequences of […]

3PAR: Considerations when mixing sizes of same disk type

Mixing disks of different sizes of the same type within a 3PAR system is generally supported. For example, you can use 900GB and 1.2TB FC disks within the same cage and even within the same CPG. When a disk fails, HPE sends a replacement disk. Some time ago, the stock of 900GB FC disks seemed to have run out. So when a 900GB disk fails, […]

Create CPG using Disk Filter

Recently I had to create a new 3PAR CPG using only newly added 1.2TB disks. However, the system already contained a cage full of 600GB disks. While it was straightforward to change the existing CPG to use all disks in cage 0 (using 3PAR Management Console, since StoreServ Management Console (SSMC) no longer supports this feature), it was not possible to create a […]
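The idea behind a disk filter can be sketched in Python: select only the disks that match a given type, size and optionally cage. The `Disk` record, its fields and the example inventory are hypothetical. This is not the 3PAR CLI or Management Console filter syntax; it only illustrates the selection logic.

```python
from dataclasses import dataclass


@dataclass
class Disk:
    # Hypothetical inventory record; field names are illustrative only.
    disk_id: int
    cage: int
    disk_type: str
    size_gb: int


def select_disks(disks, disk_type, size_gb, cage=None):
    """Return IDs of disks matching type and size (and cage, if given)."""
    return [
        d.disk_id
        for d in disks
        if d.disk_type == disk_type
        and d.size_gb == size_gb
        and (cage is None or d.cage == cage)
    ]


# Made-up inventory: cage 0 holds 600GB disks, cage 1 the new 1.2TB disks.
inventory = [Disk(i, 0, "FC", 600) for i in range(4)] + \
            [Disk(i, 1, "FC", 1200) for i in range(4, 8)]
print(select_disks(inventory, "FC", 1200))
```

Filtering by size instead of by cage keeps the selection correct even if new disks of the larger size are later added to other cages.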