How to isolate NBD backup traffic in vSphere

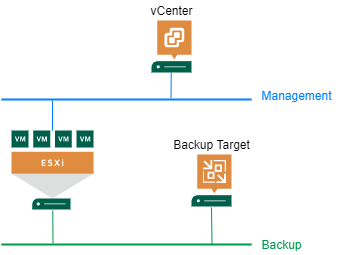

A new feature in vSphere 7 is the ability to configure a VMkernel port for backups in NBD (Network Block Device) mode, also known as Network mode. This can be used to isolate backup traffic from other traffic types. Before this release, there was no direct option to select a VMkernel port for backup. In this post I show how to isolate NBD backup traffic in vSphere. In my demo I use Veeam Backup & Replication (VBR) as the example solution.

Configuration

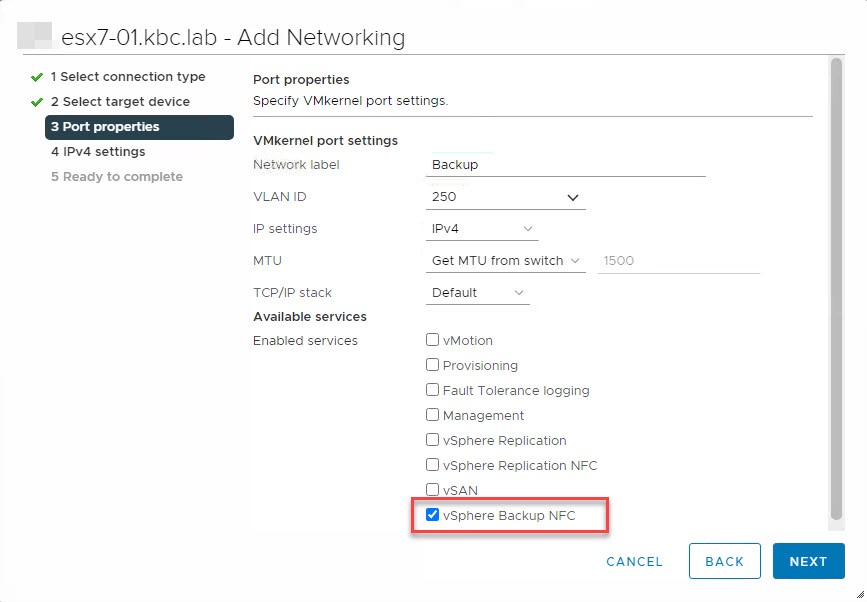

It is quite simple to configure backup traffic isolation. vSphere 7 adds a new service tag for backup: vSphere Backup NFC. NFC stands for Network File Copy. When this service is enabled on a VMkernel port, vSphere returns the IP address of that port when the backup software asks for the ESXi host's address.

So all you need to do is add a new VMkernel port, enable the Backup service, and set the IP address and VLAN ID. The host does not need to be rebooted. NBD backup traffic is now routed through this port.
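On hosts with a standard vSwitch, the same steps can also be done from the ESXi shell. The following is only a minimal sketch under assumptions: the function name create_backup_vmk is hypothetical, and the port group name Backup, the vSwitch name vSwitch0, VLAN ID 250 and the /24 netmask are illustrative values. Only vmk2 and 10.10.250.1 match the demo host used later in this post.

```shell
# Hypothetical helper: create a VMkernel port for NBD backup traffic and tag it.
# Arguments: interface, IPv4 address, netmask, port group, standard vSwitch, VLAN ID
create_backup_vmk() {
    vmk="$1"; ip="$2"; netmask="$3"; portgroup="$4"; vswitch="$5"; vlan="$6"

    # Port group on a standard vSwitch, then its VLAN ID
    esxcli network vswitch standard portgroup add --portgroup-name="$portgroup" --vswitch-name="$vswitch" || return 1
    esxcli network vswitch standard portgroup set --portgroup-name="$portgroup" --vlan-id="$vlan" || return 1

    # VMkernel interface on that port group, with a static IPv4 address
    esxcli network ip interface add --interface-name="$vmk" --portgroup-name="$portgroup" || return 1
    esxcli network ip interface ipv4 set --interface-name="$vmk" --ipv4="$ip" --netmask="$netmask" --type=static || return 1

    # Enable the backup service on the new interface
    esxcli network ip interface tag add -i "$vmk" -t vSphereBackupNFC
}

# Example call (assumed values, adjust to your environment):
# create_backup_vmk vmk2 10.10.250.1 255.255.255.0 Backup vSwitch0 250
```

Each step stops the function on failure, so a port that could not be created is never tagged. On a distributed switch the port group has to be created in vCenter instead.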

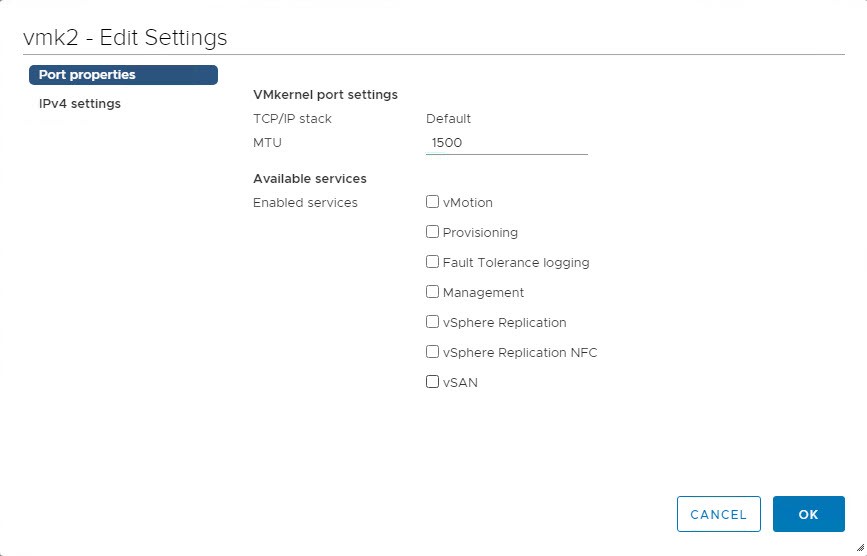

Compare this with the services available in vSphere 6.7: this one is missing there. No other service in 6.7 would re-route backup traffic.

What does it look like

There are a few options for verification. You can check the log files or monitor ESXi network throughput. On my demo ESXi host, I created a VMkernel port vmk2 with the backup tag. The IP of this port is 10.10.250.1.
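A further quick check is to list the host's TCP connections while a backup job is running. NBD transport connects to the ESXi host on TCP port 902, so the local address of those connections shows which VMkernel IP is in use. This is a sketch only: the function name nfc_connections is hypothetical, and it assumes the usual column layout of the connection list, with the local address followed by whitespace.

```shell
# Hypothetical helper: show established TCP connections to port 902
# on the given local VMkernel IP. Run it in the ESXi shell during a backup.
nfc_connections() {
    local_ip="$1"
    # -F matches the address literally (dots are not treated as wildcards)
    esxcli network ip connection list | grep -F "${local_ip}:902 " | grep ESTABLISHED
}

# Example with the backup port of the demo host:
# nfc_connections 10.10.250.1
```

If the tag works, the connections from the backup proxy show up on the IP of the tagged port and not on the management IP.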

Log file

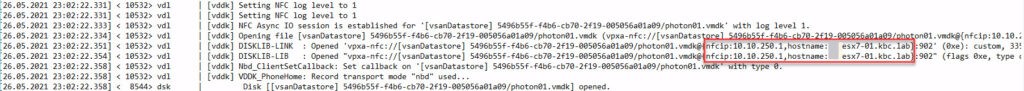

Your backup software probably creates logs for each backup process. In the case of VBR, several log files are created. To find out which IP is used for backup traffic, search the log files named Agent.job_name.Source.VMDK_name.log in the directory C:\ProgramData\Veeam\Backup\job_name on the VBR server.

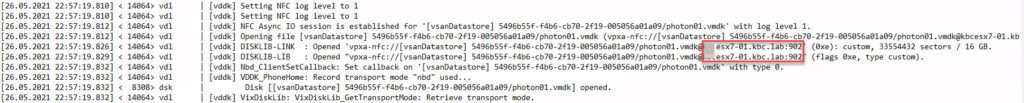

In the appropriate log file, you see the IP address that vCenter returns for the backup connection. Here is a screenshot with the backup tag enabled.

Compared with an untagged network port:

Monitor ESXi network

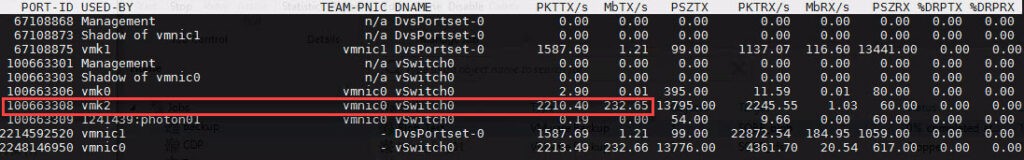

For real-time monitoring I prefer esxtop in the ESXi console. In this screenshot you see backup traffic on vmk2:

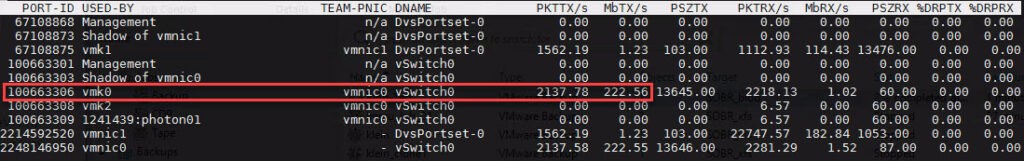

Compared with no port tagged for backup:

Veeam Preferred Networks

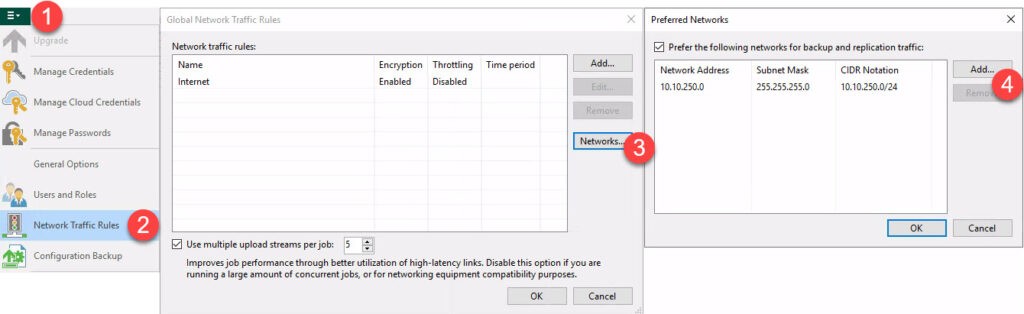

The question may arise whether it is possible to select the ESXi VMkernel port natively in VBR. To find out, I tried to use Preferred Networks to define my network of choice.

Answer: this does not work. Backup traffic is still routed through the default management port of the host.

What about the other way round: can Preferred Networks prevent the selection made by tagging a VMkernel port? The answer here is also no. Even if the management network is configured as preferred, the tagged port is used.

Command line

For scripting you have at least two options: the ESXi console and PowerShell with the PowerCLI modules.

In the ESXi console you can use the esxcli command. I will use the port name vmk2. To show the current tags of a VMkernel port, run:

esxcli network ip interface tag get -i vmk2

The following command shows all VMkernel interfaces:

esxcli network ip interface list

To add the backup tag to port vmk2, run:

esxcli network ip interface tag add -i vmk2 -t vSphereBackupNFC

To remove the backup tag from the port, run:

esxcli network ip interface tag remove -i vmk2 -t vSphereBackupNFC
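In a script it can be handy to add the tag only when it is missing. The following sketch combines the get and add commands from above. The function name ensure_backup_tag is hypothetical, and the check assumes that the output of the get command lists the tag names in plain text on its "Tags:" line.

```shell
# Hypothetical helper: add the backup tag only if the interface lacks it.
ensure_backup_tag() {
    vmk="$1"
    if esxcli network ip interface tag get -i "$vmk" | grep -q 'vSphereBackupNFC'; then
        echo "$vmk already tagged"
    else
        esxcli network ip interface tag add -i "$vmk" -t vSphereBackupNFC &&
            echo "$vmk tagged"
    fi
}

# Example:
# ensure_backup_tag vmk2
```

Running it twice is harmless: the second run only reports that the tag is already present.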

At the time of writing I have not found a direct way to use PowerCLI for showing or setting the necessary tag. But you can use the Get-EsxCli cmdlet, which lets you run the same commands as just shown. I described how to use Get-EsxCli here under Tips and Tricks. For more detail, see this post about how to create PSP rules.
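If PowerCLI is not at hand, the esxcli commands can also be sent to several hosts over SSH from a management workstation. This is a sketch under assumptions, not something I tested in this post: it assumes that SSH is enabled on every host and that key-based root login works. The function name show_tags_on_hosts and the host names are hypothetical.

```shell
# Hypothetical helper: print the tags of one VMkernel interface on several hosts.
# Arguments: interface name, followed by one or more host names
show_tags_on_hosts() {
    vmk="$1"; shift
    for host in "$@"; do
        printf '%s: ' "$host"
        ssh "root@${host}" esxcli network ip interface tag get -i "$vmk"
    done
}

# Example (host names are placeholders):
# show_tags_on_hosts vmk2 esx01.example.com esx02.example.com
```

The same loop works for the add and remove commands by swapping the esxcli call.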

Conclusion

I hope I could show how simple it is to isolate NBD backup traffic in vSphere 7.

Notes

- Get your trial of Veeam Backup&Replication!

- You can find other backup-specific new features in vSphere 7 U1 here.

- Although backup traffic uses the defined VMkernel port, control traffic for backup (snapshot creation and so on) still uses the management port.

Hi,

Please let me know whether vmk0 and vmk2 are in different subnets in your setup, and whether Veeam is in the same subnet (L2) as vmk2.

I’ve tried vmk0 and vmk2 with IPs from the same subnet and Veeam is still using vmk0.

Since “vSphereBackupNFC” uses the default TCP/IP stack it probably wants to use the default gateway of vmk0.

Therefore I assume that vmk2 and the backup server must be in the same segment, which is different from that of vmk0.

Thank you!

Hi!

Thanks for your interesting question!

In my test setup, my backup server has an IP address in each network: management (vmk0) and backup (vmk2). Because of your (and a customer's) question, I changed the IP of vmk2 to a network that is not reachable from my backup server. This should show one of two things:

– If vSphere returns this address, routing is the task of the backup system.

– If vSphere returns the IP of vmk0, VMware detects the subnet mismatch and returns an IP that is reachable. It would follow that this feature is not routable.

In fact, the changed IP was returned in my test, and the backup failed because the backup server had no way to reach the ESXi host! The next step should be to test routing directly. I will need more time to set this up.

Can you please tell me what backup software you use? Maybe there are different behaviors with this too.

Hi VNOTE42,

I’m running Veeam v11 and I’m quite sure that the backup solution is not to blame.

I still have to do a few L3 tests and will then share my results.

Hi VNOTE42,

After extensive testing with preconfigured and custom TCP/IP stacks, the situation is as follows.

The service tag “vSphere Backup NFC” only works with the default TCP/IP stack, which is why the backup server must be accessible via L2.

So you can only isolate the traffic if the backup server is reachable via L2 from the “vSphere Backup NFC” vmk interface.

If the backup server is connected via L3, the connection is made via vmk0 and its default gateway.

However, this does not isolate the backup traffic and makes the additional vmk interface obsolete.

In this example, vmk0 and vmk2 should be in different subnets. And VBR should have a secondary NIC (VLAN, physical, etc.) with an IP and a connection to the same network as vmk2. Then VBR (the server's network stack) will choose the secondary NIC, as it's the shortest way to vmk2 (1 hop).

Is the issue around this still relevant? Is it still not recommended to use this feature?

I have not tested the function for a while. If you have no problems with the current state, I think it is safe to use.